The dollar value of "official" external links: And other new research

A monthly overview of recent academic research about Wikipedia and other Wikimedia projects, also published as the Wikimedia Research Newsletter.

A paper titled "On the Value of Wikipedia as a Gateway to the Web",[1] presented at The Web Conference last year, examines how often external links on English Wikipedia are clicked, and "also sheds new light on the poorly understood role [Wikipedia] has as a provider not only of information, but also of economic wealth."

The study was based on internal data from client-side instrumentation (originally gathered for a previous research publication that specifically focused on interactions with citation links), which captured reader clicks on three kinds of external links:

"During the period considered, Wikipedia had 5.3M articles that contained at least one of 63.1M external links (totaling 49.8M unique target URLs). [...] In total, 35.3M (56.0%) of these links appeared in references, 24.9M (39.5%) in article bodies, and 2.8M (4.5%) in infoboxes. Around 1.3M articles in English Wikipedia had an infobox with links, and the average number of links per infobox in these articles was 2.08."

During the time period studied (one month in 2019), "English Wikipedia generated 43M clicks to external websites, in roughly even parts via links in infoboxes, cited references, and article bodies". Because infobox links make up only 4.5% of all external links, this corresponds to a much higher click-through rate (CTR) for infobox links (0.9%) than for article body links (0.14%) and reference links (0.03%).
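To make the click-through rate concrete, here is a minimal sketch. It assumes that a link's CTR is its clicks divided by the number of pageviews of articles displaying it; the paper's exact definition and aggregation may differ. The function `ctr_by_type` and the log records are hypothetical, and the toy counts were chosen only so that the pooled rates reproduce the reported figures.

```python
from collections import defaultdict

def ctr_by_type(records):
    """Pool clicks and impressions per link type and return clicks / impressions.

    Each record is (link_type, clicks, impressions); "impressions" is assumed
    to mean pageviews of the article that displayed the link.
    """
    clicks = defaultdict(int)
    views = defaultdict(int)
    for link_type, c, v in records:
        clicks[link_type] += c
        views[link_type] += v
    return {t: clicks[t] / views[t] for t in clicks if views[t] > 0}

# Hypothetical toy log, not data from the paper.
hypothetical_log = [
    ("infobox", 9, 1000),
    ("body", 14, 10000),
    ("reference", 3, 10000),
]
rates = ctr_by_type(hypothetical_log)
for link_type, rate in rates.items():
    print(f"{link_type}: {rate:.2%}")
```

Pooling clicks and views per link type gives heavily viewed articles more weight; averaging per-link CTRs instead would weight every link equally and can produce noticeably different numbers.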

Focusing on infobox links, the authors train a classifier to distinguish "official" links, defined as "the official website of the entity described in the respective article", which made up 0.8% of the 63.1 million links studied and had an even higher CTR (2.47%).
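The paper's classifier is not reproduced here. As a toy illustration only, the sketch below computes one hand-crafted signal that an official-link classifier might plausibly use: whether the link's host name resembles the article title. The function `domain_matches_title` and its matching rule are hypothetical.

```python
import re
from urllib.parse import urlparse

def squash(text):
    """Lowercase a string and keep only ASCII letters and digits."""
    return re.sub(r"[^a-z0-9]", "", text.lower())

def domain_matches_title(article_title, url, min_len=3):
    """Hypothetical feature: True if the first label of the link's host name
    (after dropping a leading 'www.') occurs in the squashed article title,
    or the squashed title occurs in that label."""
    host = urlparse(url).hostname or ""
    if host.startswith("www."):
        host = host[4:]
    label = host.split(".")[0]
    title = squash(article_title)
    if len(label) < min_len or not title:
        return False
    return label in title or title in label

print(domain_matches_title("NASA", "https://www.nasa.gov/"))  # True
print(domain_matches_title("Python (programming language)", "https://www.python.org/"))  # True
print(domain_matches_title("Paris", "https://www.example.com/"))  # False
print(domain_matches_title("The New York Times", "https://www.nytimes.com/"))  # False: abbreviation missed
```

A single feature like this misses abbreviated domains such as the last example, which is one reason a trained classifier combining several signals would be preferable.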

The researchers proceeded to analyze the CTR of these official infobox links in more detail, finding that it is "correlated strongly and negatively" with an article's length and popularity (number of pageviews), "possibly because longer articles, by offering more information, reduce the user’s need to gather additional information from external links, and because more popular articles are more likely to appear in shallower information-seeking sessions" according to previous research.
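This summary does not say which correlation coefficient the authors used. Assuming a rank-based measure such as Spearman's rho, a plain-Python sketch might look like the following; the article lengths and CTRs are hypothetical values.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5

def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical article lengths (bytes) and official-link CTRs.
article_lengths = [1200, 5400, 800, 15000, 3000]
official_ctrs = [0.031, 0.012, 0.040, 0.004, 0.020]
print(spearman(article_lengths, official_ctrs))  # -1.0
```

A rank correlation is a natural choice here because pageviews and article lengths are heavily skewed, and ranks are insensitive to that skew.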

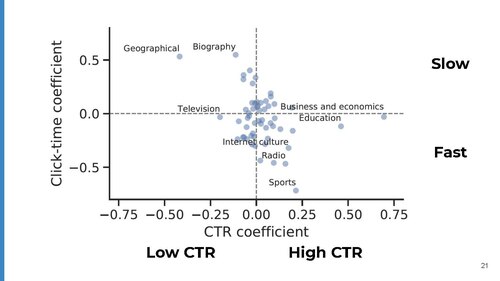

Next they examine how the CTR varies by article topic (while controlling for an article's length and popularity), finding

"... that internet culture—a topic held by most articles about websites—is indeed particularly over-represented among the articles with the very highest official-link CTR. Similar effects were observed for society (a loose mix of articles), sports, software, and entertainment, among others. On the contrary, we observed that geographical, biography, and television, among others, were particularly under-represented among the highest-CTR official links."
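"Over-represented among the articles with the very highest CTR" can be read as a ratio: a topic's share among the top-ranked articles divided by its share overall. The sketch below computes that ratio. It assumes the scores have already been adjusted for article length and popularity (for example, as residuals from a regression); that adjustment step is not shown and may differ from what the authors did. The function name, `top_fraction` parameter, and data are hypothetical.

```python
from collections import Counter

def overrepresentation(articles, top_fraction=0.1):
    """For (topic, adjusted_score) pairs, return each topic's share among the
    top `top_fraction` of articles divided by its overall share.
    Ratios above 1 indicate over-representation, below 1 under-representation."""
    ranked = sorted(articles, key=lambda a: a[1], reverse=True)
    k = max(1, int(len(ranked) * top_fraction))
    top_counts = Counter(topic for topic, _ in ranked[:k])
    all_counts = Counter(topic for topic, _ in ranked)
    n = len(ranked)
    return {
        topic: (top_counts[topic] / k) / (all_counts[topic] / n)
        for topic in all_counts
    }

# Hypothetical length- and popularity-adjusted CTR scores.
articles = [
    ("internet culture", 0.9), ("internet culture", 0.8),
    ("geography", 0.1), ("geography", 0.2),
    ("biography", 0.3), ("biography", 0.05),
]
ratios = overrepresentation(articles, top_fraction=0.5)
print(ratios)
```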

Furthermore, the study examined the "click time" (from opening an article to clicking an external link):

"The global median click time was 32.9 seconds (31.8 seconds for desktop, 34.4 seconds for mobile), with a much lower value for infobox links (18.7 seconds; 20.1 seconds for official links), and larger values for the article-body links (35.4 seconds) and reference links (51.8 seconds)."

The authors use the term "article body" as a catch-all for every location outside infoboxes and footnotes. This is inconsistent with Wikipedia's guidelines on external links, where that term excludes the separate "External links" section at the end of an article; in fact, the guidelines state that external links "normally should not be placed in the body of an article". As a consequence, the paper (unfortunately or perhaps fortunately) mostly does not provide information on whether external links that are placed higher up within the article text (in violation of the guidelines or exploiting one of their rare exceptions) may generate more traffic, apart from one partial result:

The short click time of infobox links, however, seems to be due to their prominent position within articles: when approximately controlling for position by considering only article-body links in the top 20% of the page, the median click time dropped to 22.2 seconds, only about 10% longer than for official infobox links (20.1 seconds).
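The relative gaps implied by the reported medians can be checked directly. Using only figures quoted above, 22.2 seconds is about 10% longer than the 20.1-second median for official infobox links, and about 19% longer than the 18.7-second median for all infobox links.

```python
# Median click times in seconds, as reported in the quoted passages.
medians = {
    "all infobox links": 18.7,
    "official infobox links": 20.1,
    "article-body links, top 20% of page": 22.2,
}
top_body = medians["article-body links, top 20% of page"]
for name in ("all infobox links", "official infobox links"):
    # Percentage by which the top-of-page body-link median exceeds this median.
    pct = (top_body / medians[name] - 1) * 100
    print(f"vs {name}: {pct:.0f}% longer")
```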

Again analyzing by article topic, the researchers found that "clicks on official links to entertainment-related websites occurred faster, whereas links to websites on more classic encyclopedic topics, such as biographies, geographical content, history, etc., occurred more slowly."

They also note that

"Wikipedia frequently serves as a stepping stone between search engines and third-party websites. We captured this effect quantitatively as well as in a manual analysis, where we found that URLs that are down-ranked or censored by search engines, and thus not retrievable via search, can often be found in Wikipedia infoboxes, which leads search users to take a detour via Wikipedia. We conclude that Wikipedia regularly and systematically meets information needs that search engines do not meet, which further confirms Wikipedia’s central role in the Web ecosystem."

Lastly, regarding the economic value of Wikipedia for website owners, the paper asks

"... how much money external-website owners would have to pay in order to obtain an equivalent number of clicks by other means, such as paid ads. In this spirit, we applied the Google Ads API to the content of official websites linked from Wikipedia in order to generate key words for sponsored search and estimated their cost per click at market price. We conclude that the owners of external websites linked from English Wikipedia’s infoboxes would need to collectively pay a total of around $7–13 million per month (or $84–156 million per year) for sponsored search in order to obtain the same volume of traffic that they receive from Wikipedia for free."
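The core of this estimate is simple arithmetic: for each linked site, multiply the clicks it receives from Wikipedia by an estimated cost per click (CPC), then sum over sites. In the paper the CPCs came from Google Ads keyword estimates; in the sketch below they are hard-coded placeholders, and the function name and all per-site numbers are hypothetical. No Google Ads API calls are made.

```python
def equivalent_ad_spend(sites):
    """Sum clicks x cost-per-click over sites and return (low, high) totals.

    `sites` holds (monthly_clicks, cpc_low, cpc_high) tuples, CPC in US dollars.
    """
    low = sum(clicks * lo for clicks, lo, _ in sites)
    high = sum(clicks * hi for clicks, _, hi in sites)
    return low, high

# Hypothetical per-site figures, for illustration only.
sites = [
    (120_000, 0.50, 0.90),
    (40_000, 1.20, 2.00),
    (5_000, 3.00, 4.50),
]
monthly_low, monthly_high = equivalent_ad_spend(sites)
annual_low, annual_high = 12 * monthly_low, 12 * monthly_high
print(f"per month: ${monthly_low:,.0f} to ${monthly_high:,.0f}")
print(f"per year:  ${annual_low:,.0f} to ${annual_high:,.0f}")
```

The same annualization applied to the paper's monthly range gives 12 × $7–13 million = $84–156 million, matching the quoted yearly figure.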

Other recent publications that could not be covered in time for this issue include the items listed below. Contributions, whether reviewing or summarizing newly published research, are always welcome.

From the abstract:[2]

"In this thesis, we have computationally analysed the language used in Wikipedia in order to find similarities between the language used in different articles. To do so, we have syntactically parsed articles of Wikipedia in different languages using UDPipe 2.0 and gathered the languages’ recurrent syntactic patterns using Grammatical Framework’s GF-UD. Then, we have compared the analyses with cosine similarity in two ways: based on dependency relations and based on linguistic patterns. We have seen that there is a basis for the Abstract Wikipedia project: there are syntactic similarities not only within one language, but also within multiple languages. In addition, we have found that semantically-related topics have a higher similarity than those which are not. Finally, we have gathered syntactic patterns of every language and compared them, which can constitute the basis of the creation of the Renderers for Abstract Wikipedia [a project to create a language-independent version of Wikipedia using structured data]."
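As a sketch of the "based on dependency relations" comparison, assume each parsed article is summarized as counts of Universal Dependencies relation labels; this representation is an assumption, and the thesis's exact vectors may differ. Cosine similarity between two such count vectors can then be computed as below. The UDPipe parsing step is not shown, and the counts are hypothetical.

```python
import math
from collections import Counter

def cosine_similarity(a, b):
    """Cosine similarity between two sparse count vectors stored as Counters."""
    dot = sum(a[key] * b[key] for key in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical dependency-relation counts for two parsed articles.
article_x = Counter({"nsubj": 40, "obj": 25, "amod": 30, "det": 50})
article_y = Counter({"nsubj": 35, "obj": 20, "amod": 10, "case": 45})
print(round(cosine_similarity(article_x, article_y), 3))
```

Because cosine similarity ignores vector length, a long and a short article with the same relative mix of relations score as identical, which suits comparisons across articles of very different sizes.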

From the abstract:[3]

"This paper analyses the online encyclopaedia Wikipedia using Michel Foucault’s (1926–1984) concept of heterotopia. In Foucault’s writings, heterotopias are both similar to and distinct from the conditions that give rise to them. The paper undertakes a case study of one entry on Wikipedia (the entry for the “Episteme”) focusing primarily on the main entry and the talk page. The methodology is content analysis with a directed approach: data were gathered in November–December 2020. The paper argues Wikipedia can usefully be analysed as a heterotopia because it exposes the contentious conditions of knowledge production, which is not standard practice for an encyclopaedia."

As explained in the paper, "Heterotopias are an alternative but not an idyllic alternative. They are possible rather than imaginary. Utopias are a departure from the present but heterotopias both engage with and question the present by enacting an alternative, destabilising established practices and understandings in the process."

From the abstract and paper:[4]

"... we consider [time series] models constructed with the help of dynamical systems that have relatively simple limiting behavior. Switching between different trajectories of the phase portrait, we obtain a high precision prediction. Moreover, the dynamical system approach provides the global qualitative picture of the model's phase portrait, and allows us to discuss multidimensional patterns and long-term properties of the process. The simple limiting behavior allows us to associate different trends with different process's realization scenarios that can be influenced by externalities.

"We demonstrate these ideas using the examples of the Wikipedia's traffic of Readers, Contributors and Edits [using data for 2008–2019]. First, we consider the two-dimensional model, predicting the traffic of Readers and Edits. [...] Different trends (corresponding to different fixed points) can be associated with different platform's incentives. Then, adding the Contributors data, we discuss the three-dimensional model (more precise than the three-dimensional VAR). It provides a more accurate short-term prediction of Edits than the two-dimensional dynamic model. The global picture shows that the number of new Edits tends to decline in the future, while the number of new Contributors and Readers will grow in the long run. [...] This can probably be explained by the fact that many of the subjects, in which Readers are interested, have already been contributed to the Wikipedia platform, and there is no demand for the new Edits. However, the Contributors will continue to correct some articles, and the Readers will be visiting the platform for the references."
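To illustrate what "simple limiting behavior" and "fixed points" mean for a two-dimensional model, here is a generic sketch, not the authors' model: a stable affine map x_{t+1} = A x_t + b, whose trajectories converge to the fixed point x* = (I − A)^{-1} b. Without a noise term this has the same form as a first-order vector autoregression (VAR(1)). The coefficients are hypothetical and not fitted to Wikipedia data; the paper's models may be nonlinear and switch between trajectories.

```python
def step(state, A, b):
    """One step of the affine map x_{t+1} = A x_t + b for a 2-D state."""
    x, y = state
    return (A[0][0] * x + A[0][1] * y + b[0],
            A[1][0] * x + A[1][1] * y + b[1])

def fixed_point(A, b):
    """Solve x* = A x* + b, i.e. (I - A) x* = b, by Cramer's rule (2x2 case)."""
    m00, m01 = 1 - A[0][0], -A[0][1]
    m10, m11 = -A[1][0], 1 - A[1][1]
    det = m00 * m11 - m01 * m10
    if det == 0:
        raise ValueError("I - A is singular; no unique fixed point")
    return ((m11 * b[0] - m01 * b[1]) / det,
            (m00 * b[1] - m10 * b[0]) / det)

# Hypothetical coefficients; eigenvalues 0.5 and 0.8 lie inside the unit
# circle, so every trajectory converges to the fixed point.
A = [[0.5, 0.1], [0.0, 0.8]]
b = [1.0, 0.4]

state = (0.0, 0.0)
for _ in range(200):
    state = step(state, A, b)
print(fixed_point(A, b), state)
```

Different choices of A and b yield different fixed points, which is the sense in which "different trends (corresponding to different fixed points)" can be attached to different scenarios.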
